Bridging the Knowledge Gap on Digital Clinical Measures: A Technology Partner’s Perspective on ‘The Playbook’


Written by Jeremy Wyatt

ActiGraph was one of the very first commercial providers of wearable motion-sensing technology, with legacy products dating back to the 1990s. In the years since, innovations in hardware technology, cloud storage and computing, and system interoperability have vastly expanded the capabilities of wearable sensors, adding many new layers of complexity in the process. Although this type of technology has been used and validated in academic research for over twenty years, it’s still relatively new within the world of clinical drug development. And while a myriad of industry publications and resources provide guidance around various aspects of wearable sensors and digital measures, there is still a knowledge gap when it comes to actual implementation of digital measures in a clinical drug trial.

This knowledge gap is part of the reason we love The Playbook here at ActiGraph. Compiled by the Digital Medicine Society (DiMe), this 400+ slide collection has created a shared foundation for remote monitoring and digital clinical measures across research, care, and public health. ActiGraph partnered with DiMe on its second “Tour of Duty” for The Playbook. During this ‘Tour,’ we collaborated with our colleagues to build targeted materials that help drive adoption of digital clinical measures in the pharma world. For those who still need convincing, we work collectively with them to identify barriers and challenges, establish ROI models, and develop evidence portfolios. For those partners who are ready to move forward, we equip them further by making the tools in The Playbook easily accessible and applicable to various stakeholder groups across the organization (e.g., clin ops, procurement, etc.).

I’ve read through The Playbook several times. Here, I’d like to offer up a few highlights that really get me excited about the opportunity to partner with some of the best and the brightest in the industry to move the needle forward and ultimately help patients live longer, better lives.

Measures, Technology & Operations: Order Matters

The Playbook framework is built around three key foundational processes to ensure successful development and deployment of remote monitoring and digital clinical measures. But it’s not just the processes that are important - the order in which they are approached is a key distinction. “I saw an incredible fitness watch at DIA” is NOT the place to start. The emphasis should be on measures first, then technologies, then operations.

Figure 1 - Order matters when implementing digital measures in clinical research.

Measures

In this industry, it’s clear that we must have a foundational language around digital measures. The Playbook authors do a great job of clarifying the difference between concepts and measures, providing several examples of developing “measures that matter to patients” in the context of connected sensors. As a bonus, we also learn that a measure is both a noun and a verb!

Technologies

This is where most people incorrectly start the discussion. The term “technologies” refers to the hardware (wearable or sensor) and/or accompanying software. Many ActiGraph clients ask about the “device” when referring to the technology; The Playbook gives us good reasons to avoid that term (hint: FDA uses the word “device” for something else). Technology selection involves defining specification requirements and understanding the benefits versus risks of using the technology. We’re reminded of the importance of security and privacy. Technologies often come with privacy agreements and End User License Agreements (EULAs) which, when utilizing a connected device, present an immediate risk. This requires some diligence, which is why it’s important to have a technology partner like ActiGraph to help navigate this area. Most providers in this space leverage a technology ecosystem that consists of the sensor, algorithms, and a patient data platform. These components are crucial for proper execution, but they must be understood and properly vetted.


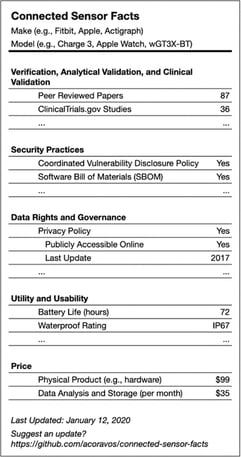

Figure 2 - The five components of fit-for-purpose sensor technology can be thought of as a food label.

We’re often asked about “fit-for-purpose” with regard to our technology products. We really appreciate how the five components of fit-for-purpose are articulated in The Playbook (V3, security practices, data rights & governance, utility & usability, and economic feasibility). It’s important to understand each of these, because when executing a clinical trial, someone is going to care about each aspect somewhere down the line. While we know the FDA does not require that the technology be a regulated medical device (yes, I used that word on purpose), it’s important to understand that organizations under FDA’s purview will be required to document many of the pieces of the puzzle that you’re going to need (e.g., verification, validation, safety, and usability). We put together a practical guide to help study teams unpack what the FDA would expect to see in a regulatory submission based on the December 2023 release of their Digital Health Technology guidance, which you can access here.

By the way, if you haven’t read “the V3 paper” (aka Verification, analytical validation, and clinical validation (V3): the foundation of determining fit-for-purpose for Biometric Monitoring Technologies (BioMeTs)), I highly encourage you to check it out here.

Operations

This is easily the most overlooked portion of the remote monitoring process. Most understand that there is an operational component to implementing wearable technology in a clinical study, but oftentimes this step is merged with the technology selection process, which usually muddies up the implementation.

The Playbook makes it clear that an operational deployment plan:

- Is crucial

- Should be a cross-functional team effort (not just innovation, procurement, or clin ops)

- Should happen before the technology selection is finalized (because issues often arise here, like usability)

Operational considerations are broken into four steps, two before go-live and two after. Working with a technology provider on all the operational aspects that are typically handled by the clin ops team will pay off big time in the long run. For instance, if you don’t have a plan around monitoring and responding to the data (remember, this is usually real-time telemetry data we’re talking about), you’re going to be in trouble when all of your sites start calling. Training is crucial here, and your technology provider should work to customize your training materials to align with the protocol schedule and ensure that they are validated for accuracy, proper translation, etc.

Speaking of all that data, The Playbook mentions the operational considerations around connectivity, bandwidth, software/firmware updates, and firewall related issues. In our early days of servicing the clinical trial industry, we encountered a lot of bumps in the road around these topics. Over the years, we’ve evolved our products to ensure that we can work around most of these challenges, but it’s no surprise that we still see many study teams neglect to plan for these nuances.

One important aspect of operations that isn’t explicitly called out in The Playbook is inventory balance. It’s rare that all clinical sites recruit at the same rate. Your technology deployment plan should take into consideration “load balancing,” wherein the inventory can be moved from one site to another. Otherwise, you could see your technology budget, and your ROI, decrease quickly.
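To make the “load balancing” idea concrete, here is a minimal Python sketch of how a study team might plan transfers between sites. It is only an illustration under stated assumptions: the `Site` fields, the site names, and the unit counts are all hypothetical, and the greedy matching is one simple approach rather than a description of how ActiGraph or The Playbook handles inventory.

```python
from dataclasses import dataclass


@dataclass
class Site:
    name: str
    units_on_hand: int   # sensors currently in stock at the site (hypothetical field)
    units_needed: int    # sensors needed for projected enrollment (hypothetical field)


def plan_transfers(sites):
    """Greedily move surplus units from overstocked sites to short sites.

    Returns a list of (from_site, to_site, units) tuples.
    """
    surplus = [[s.name, s.units_on_hand - s.units_needed]
               for s in sites if s.units_on_hand > s.units_needed]
    shortage = [[s.name, s.units_needed - s.units_on_hand]
                for s in sites if s.units_needed > s.units_on_hand]

    transfers = []
    i = j = 0
    while i < len(surplus) and j < len(shortage):
        # Move as many units as the donor can spare or the recipient needs.
        units = min(surplus[i][1], shortage[j][1])
        transfers.append((surplus[i][0], shortage[j][0], units))
        surplus[i][1] -= units
        shortage[j][1] -= units
        if surplus[i][1] == 0:
            i += 1
        if shortage[j][1] == 0:
            j += 1
    return transfers


# Illustrative numbers only: one fast-recruiting pair of sites, one slow one.
sites = [
    Site("Site A", units_on_hand=20, units_needed=5),
    Site("Site B", units_on_hand=4, units_needed=12),
    Site("Site C", units_on_hand=6, units_needed=10),
]
transfers = plan_transfers(sites)
for src, dst, units in transfers:
    print(f"Move {units} units from {src} to {dst}")
```

In this made-up scenario, the slow-recruiting site covers both shortfalls without any new purchases. A real plan would also need to account for shipping time, customs, and refurbishment between participants.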

Study close-out is another frequently overlooked area where we have seen a lot of missteps occur. There are numerous important jobs surrounding the end of a study, including technology recovery, hard locking the database, preparing reporting, and getting the final data transfer. You should expect your technology partner to be able to deliver during the crucial end of study timelines.

An Emphasis on Data Quality

The Playbook carves out a special section dedicated to “benchmarking” digital clinical measures. The idea of benchmarking stems from the statistical concept of outliers. Data legitimacy is an important concept, especially when we’re talking about generating confidence in the data. One advantage of this industry’s old methods of evaluation is that they are consistent. When a principal investigator sits down to perform a UPDRS evaluation on a Parkinson’s patient, she is filling out the same form as the other ten investigators in the study. As the landscape of patient care changes, we must properly manage the data to ensure that the boundaries are controlled. Doing so ensures confidence in the data that we are literally staking lives on. Whether it’s identifying if someone put their wearable in the clothes dryer or highlighting an uncharacteristic delta in a patient’s weight as captured from a smart scale, benchmarking serves to produce confidence in the data, and ultimately helps build trust with regulators, patients, and the “unconverted” in our space.
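As a rough illustration of the smart-scale example, the Python sketch below flags any weight reading that jumps too far from the last accepted reading. The function name, the 3 kg threshold, and the sample readings are assumptions made up for this example. They are not taken from The Playbook or from any ActiGraph product, and a real benchmarking rule would be set with clinical input.

```python
def flag_weight_outliers(weights_kg, max_delta_kg=3.0):
    """Flag readings that differ from the last accepted reading by more
    than max_delta_kg (a hypothetical threshold chosen for illustration).

    Returns a list of booleans, one per reading; True means "review this".
    """
    flags = []
    last_good = None
    for weight in weights_kg:
        if last_good is None:
            # First reading: nothing to compare against, so accept it.
            flags.append(False)
            last_good = weight
        elif abs(weight - last_good) > max_delta_kg:
            # Implausible jump: flag it and keep the previous baseline.
            flags.append(True)
        else:
            flags.append(False)
            last_good = weight
    return flags


# Made-up daily readings; the 61.0 kg value might be a different
# household member stepping on the participant's scale.
readings = [82.1, 81.9, 82.4, 61.0, 82.0, 82.3]
flags = flag_weight_outliers(readings)
print(flags)
```

A flag here means “send for review,” not “delete.” A genuine, sustained change in weight would keep tripping this simple rule, which is exactly the kind of case a human should look at.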

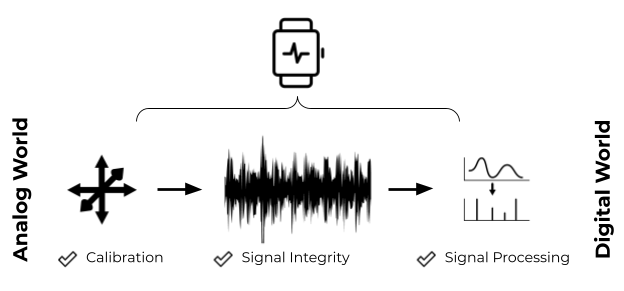

From our perspective at ActiGraph, confidence in the quality of data captured from wearable sensors starts at the bottom with the sensor itself and moves all the way through to the outcome. We live in an analog world that is slowly becoming digital. Properly representing the world that we live in (analog) in the digital realm is an immensely important step, and it’s easy to take that step for granted. The argument in favor of proper verification makes sense when we consider the risk of getting this wrong. Device performance verification is the first step in a three-step process to ensure that we don’t get “garbage in” which will result, every time, in “garbage out.”

Figure 3 - Converting from the analog world to the digital world is an important step that occurs within the context of the sensor or the technology ecosystem and cannot be overlooked.
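For readers who want to see what that analog-to-digital step looks like in practice, here is a simplified Python sketch of quantization: mapping a continuous acceleration value onto a fixed set of integer counts. The ±8 g range and 12-bit resolution are assumptions picked for illustration and do not describe any particular ActiGraph sensor.

```python
def quantize(value_g, full_scale_g=8.0, bits=12):
    """Map an analog acceleration (in g) to the nearest signed integer count.

    full_scale_g and bits are illustrative assumptions, not real specs.
    """
    levels = 2 ** (bits - 1)          # counts available on each side of zero
    step = full_scale_g / levels      # size of one count, in g
    count = round(value_g / step)
    # Clip to the representable range, as a saturating converter would.
    return max(-levels, min(levels - 1, count))


def to_g(count, full_scale_g=8.0, bits=12):
    """Convert an integer count back to acceleration in g."""
    return count * full_scale_g / 2 ** (bits - 1)


analog = 0.98765                      # "true" acceleration in g (made up)
count = quantize(analog)
digital = to_g(count)
error = abs(analog - digital)
print(count, digital, error)
```

Within the sensor's range, the round trip loses at most half a count, which is why resolution matters for verification. Outside the range, the value simply saturates, and no downstream algorithm can recover what was clipped.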

Here at ActiGraph, we are big proponents of unadulterated raw sensor data. Like the DNA of a living organism, raw sensor data can be considered an asset that lives on beyond its original intent. This gives us and our clients the ability to take a retrospective look at what we may have missed “back then” and also provides a layer of safety should our algorithms (which are usually performed in the “digital world”) have issues that call into question our outcomes.

Next Steps

As we move forward with DiMe, our goal is to encourage and facilitate adoption of The Playbook within the clinical research community and beyond. There is so much rich information that can be captured using digital health technology, and the upside is tremendous. At ActiGraph, we believe in the power of sensor data to transform the landscape of clinical studies and provide new and exciting insights into patient behaviors. And who knows, maybe the next gold standard measurement is out there waiting to be found.